Unexpectedly participated in a class action lawsuit in the USA

Recently, I unexpectedly encountered the justice system in the USA.

- In 2017-2018, when there was a crypto boom, I invested a little in a mining startup: I purchased their tokens and one hardware unit.

- The startup took off and began to build a mega farm, but it didn't work out: the fall of Bitcoin coincided with their spending peak, the money ran out, and the company went bankrupt. It's funny that a month or two after they filed for bankruptcy, Bitcoin won back everything it had lost. Sometimes you're just unlucky :-)

- I had already written off the lost money, of course. I acted on the rule "invest only 10% of the income you don't mind losing."

- Since everything legally happened in the USA, people gathered there and filed a class action lawsuit.

- I received a letter stating that I would automatically be among the plaintiffs unless I opted out. I did not opt out; when else would I get an opportunity to participate in a class action?

- Then everything went quiet until 2024.

- In the spring, another letter came: "Confirm your ownership of the tokens and indicate their quantity. We won and will distribute the remaining funds among all token holders proportionally, minus a healthy commission for the lawyers."

- But how do I confirm it? More than five years have passed. The Belarusian bank account is closed, the company's admin panel is unavailable, and there was no direct transaction on the blockchain: I paid in Bitcoin directly from an exchange (although that is not recommended).

- I found an email from the company confirming that I had bought tokens (without the amount) and printed it to PDF. I attached it to the application along with screenshots of the exchange transactions for the relevant period. I gave the address of my current wallet, where these tokens lie as dead weight. I sent everything.

- Today, I received $700 in my bank account. Of course, this is not all of the lost money: about 25%, maybe slightly more.

What conclusions can be drawn from this:

- Sometimes, you just don't get lucky in your business.

- Keep all emails. You never know what will come in handy, or when.

- Class action lawsuits work, and they work in an interesting way.

- Justice in the USA works slowly but, apparently, inevitably, and (unexpectedly for me) in favor of the minor participants in a conflict. At least sometimes.

Grainau: hiking and beer at 3000 meters

How it all looks from the ground.

For her vacation, Yuliya decided to show me the beautiful German mountains and took me for a couple of days to Grainau — it's a piece of Bavaria that's almost like Switzerland. At least, it is similar to the pictures of Switzerland that I've seen :-D

In short, it's a lovely place with a measured pace of life. If you need to catch your breath, calm your nerves, and enjoy nature, then this is the place for you. But if you can't live without parties, you'll get bored quickly.

What's there:

- The highest mountain in Germany plus a couple of glaciers.

- There's skiing in winter. If you really need it, you can find a place to ski in summer, but the descent is short, and the lifts are turned off.

- A large clean lake and a couple of smaller ones.

- A huge number of trails for hiking.

- A huge number of waterfalls, streams, and a couple of mountain rivers.

- Restaurants with beer.

- Beautiful fallen trees in the forests, private property, fences, cows with bells, and "racing tractors" (I don't know a better name for this phenomenon, but the tractors move fast there :-D).

That was the short version; now for the details.

Migrating from GPT-3.5-turbo to GPT-4o-mini

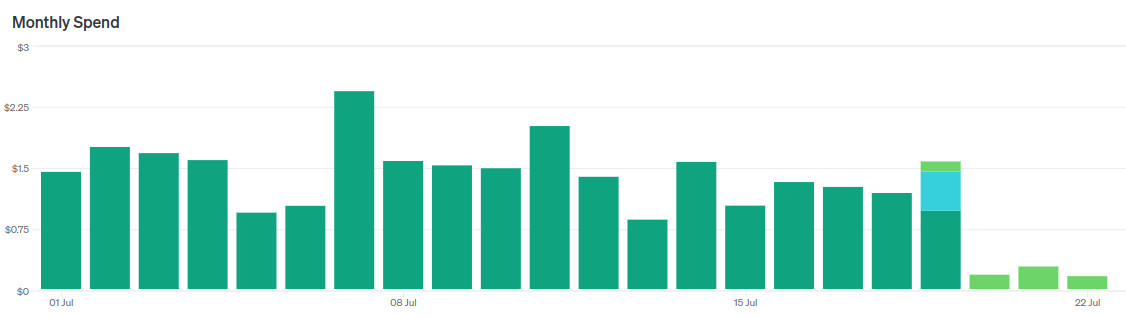

Guess when I switched models.

Recently OpenAI released GPT-4o-mini — a new flagship model for the cheap segment, as it were.

- They say it works "almost like" GPT-4o, sometimes even better than GPT-4.

- It is almost three times cheaper than GPT-3.5-turbo.

- The context size is 128k tokens, versus 16k for GPT-3.5-turbo.

Of course, I immediately started migrating my news reader to this model.

In short, it's a cool replacement for GPT-3.5-turbo. I immediately replaced two LLM agents with one without changing prompts, reducing costs by a factor of 5 without losing quality.
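A back-of-the-envelope sketch of where a factor of about 5 can come from. The prices below are assumptions (approximate public list prices around the GPT-4o-mini release, not numbers from this post), and the token counts are hypothetical. The idea is that one merged call replaces two calls, and each token is roughly three times cheaper.

```python
# Assumed prices in USD per 1M tokens: (input, output). Check current pricing.
PRICES = {
    "gpt-3.5-turbo": (0.50, 1.50),
    "gpt-4o-mini": (0.15, 0.60),
}


def call_cost(model, input_tokens, output_tokens):
    """Cost in USD of a single call with the given token counts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: a news item of ~2000 input tokens.
# Before: two agents, each reads the item and writes ~200 tokens.
old_cost = 2 * call_cost("gpt-3.5-turbo", 2000, 200)
# After: one merged agent reads the item once and writes both answers (~400 tokens).
new_cost = call_cost("gpt-4o-mini", 2000, 400)

savings_factor = old_cost / new_cost
print(f"about {savings_factor:.1f}x cheaper")
```

With other token counts the factor shifts, because input and output tokens are discounted differently under these assumed prices.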

However, then I started tuning the prompt to make it even cooler and began to encounter nuances. Let me tell you about them.

Computational mechanics & ε- (epsilon) machines

I found a couple of new concepts to keep an eye on.

Computational mechanics

There is computational mechanics that deals with numerical modeling of mechanical processes, and there is an article about it on Wikipedia. This post is not about it.

This post is about the computational mechanics that studies abstractions of complex processes: how emergent behavior arises from the sum of the behavior / statistics of low-level processes. For example, why the Great Red Spot on Jupiter is stable, or why the result of a processor's calculations does not depend on the properties of each electron in it.

ε- (epsilon) machine

The concept of a device that can exist in a finite set of states and can predict its future state (or state distribution?) based on the current one.

Computational mechanics allows (or should allow) us to represent complex systems as a hierarchy of ε-machines. This creates a formal language for describing complex systems and emergent behavior.

For example, our brain can be represented as an ε-machine. Formally, the state of the brain never repeats (voltages on neurons, positions of neurotransmitter molecules, etc.), but there are a huge number of situations in which we do the same thing under the same conditions.
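To make "a finite set of states that predicts the future" a bit more concrete, here is a minimal sketch of a hand-written toy machine. It is an illustration only: real ε-machines are reconstructed from observed data, while here the states `A` and `B`, the transition table, and all function names are assumptions made up for the example. The machine emits the so-called even process, where ones always come in blocks of even length.

```python
import random

# Toy machine: state -> list of (probability, emitted symbol, next state).
TRANSITIONS = {
    "A": [(0.5, "0", "A"), (0.5, "1", "B")],
    "B": [(1.0, "1", "A")],
}


def next_symbol_distribution(state):
    """Prediction: probability of each next symbol, given only the current state."""
    dist = {}
    for prob, symbol, _next_state in TRANSITIONS[state]:
        dist[symbol] = dist.get(symbol, 0.0) + prob
    return dist


def step(state, rng):
    """Sample one transition and return (emitted symbol, next state)."""
    r = rng.random()
    cumulative = 0.0
    for prob, symbol, next_state in TRANSITIONS[state]:
        cumulative += prob
        if r < cumulative:
            return symbol, next_state
    # Guard against floating-point rounding: fall back to the last transition.
    _prob, symbol, next_state = TRANSITIONS[state][-1]
    return symbol, next_state


def generate(length, state="A", seed=0):
    """Generate a string of symbols, starting from the given state."""
    rng = random.Random(seed)
    symbols = []
    for _ in range(length):
        symbol, state = step(state, rng)
        symbols.append(symbol)
    return "".join(symbols)


sequence = generate(40)
print(sequence)
print("in A:", next_symbol_distribution("A"))
print("in B:", next_symbol_distribution("B"))
```

The point of the sketch: the full history of emitted symbols can be arbitrarily long, but to predict the next symbol it is enough to know which of the two states the machine is in.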

Here is a popular science explanation: https://www.quantamagazine.org/the-new-math-of-how-large-scale-order-emerges-20240610/

P.S. I will try to dig into scientific articles. I will tell you if I find something interesting and practical.

P.P.S. I have long been thinking along similar lines. Unfortunately, the twists of life do not allow me to dig seriously into science and mathematics. I am always happy when I come across the results of other people's digging.

Dungeon generation — from simple to complex

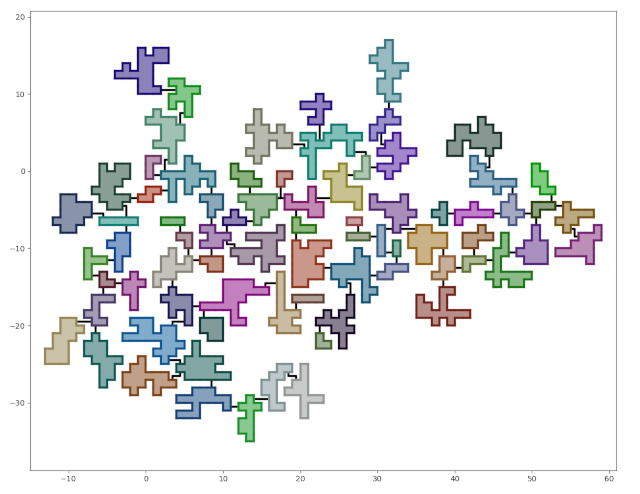

What we should get.

This is a translation of a post from 2020

This is a step-by-step guide to generating dungeons in Python. If you are not a programmer, you may be interested in reading how to design a dungeon [ru].

I spent a few evenings testing the idea of generating space bases. The space base didn't work out, but the result looks like a good dungeon. Since I went from simple to complex and didn't use any rocket science, I turned the code into a tutorial on generating dungeons in Python.

By the end of this tutorial, we will have a dungeon generator with the following features:

- The rooms will be connected by corridors.

- The dungeon will have the shape of a tree. Adding cycles will be elementary, but I'll leave it as homework.

- The number of rooms, their size, and the "branching level" will be configurable.

- The dungeon will be placed on a grid and consist of square cells.

The entire code can be found on GitHub.

There won't be any code in the post — all the approaches used can be easily described in words. At least, I think so.

Each development stage has a corresponding tag in the repository, containing the code at the end of the stage.

The aim of this tutorial is not only to teach how to generate dungeons but to demonstrate that seemingly complex tasks can be simple when properly broken down into subtasks.